The Truth about Skeuomorphism vs. Flat Design

Medialoot

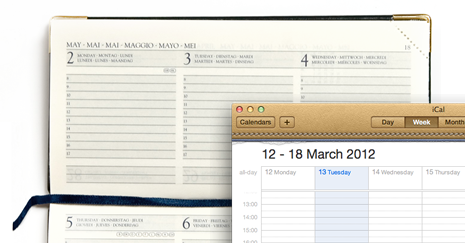

A classic example of skeuomorphism: Apple’s iCal mimics the texture and layout of a paper calendar, including torn edges that have no function or interactivity.

A senior UI designer at Apple called skeuomorphism “visual masturbation”. He belongs to one of two camps at Apple representing different philosophies. The fight between these two camps culminated in the departure of Scott Forstall, the rise of Jony Ive to chief designer for both hardware and software, and the presentation of iOS 7, which sparked an emotional public debate.

skeu·o·morph [skyoo-uh-mawrf]

an ornament or design on an object copied from a form of the object when made from another material or by other techniques, as an imitation metal rivet mark found on handles of prehistoric pottery.

What is this discussion really about? On the surface, followers of skeuomorphism seem to be arguing with supporters of flat design, but if we take a closer look at the background, the discussion has a much broader scope.

In this article, I will highlight the origins and foundations of modern user interfaces in the following sections, and I will explain why I believe that the current discussion is misguided.

- A look back: the birth of the desktop metaphor

- Basics: cognitive psychology

- The problem with metaphors

- The evolution of interfaces as a result of a continuous dialog between user and designer

- An outlook and an objective assessment of the role of visual metaphors and skeuomorphic design

A Look Back

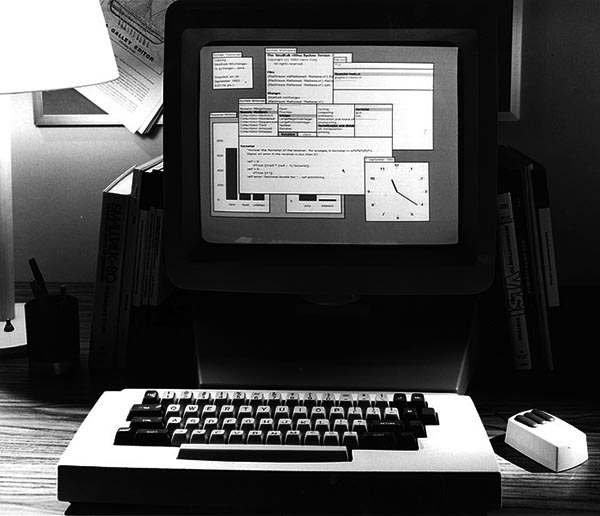

At Xerox's Palo Alto Research Center (PARC) in the 1970s, researchers including Alan Kay developed a trendsetting computer, the Xerox Alto, which presented the workspace through the conceptual metaphor of a desktop and could be operated with a mouse, then still a novel input device.

digibarn.com

Building on and borrowing from the Alto’s breakthrough concept, Apple started to build its desktop operating system for the Apple Lisa, and all current operating systems still use that concept, more or less, to this day.

Basics: Cognitive Psychology

But why do designers try to mimic the physical world on computer screens?

Donald Norman, an American professor of cognitive science who has done pioneering work on human information processing and later on the psychology of human-computer interaction, offers possible explanations in his work.

In his most popular book, “The Design of Everyday Things”, he describes, among other things, the space of action in which humans move when they gather and assess information and eventually decide to act (Interaction Magazine). This space of action is essentially constrained by the following parameters:

- physical constraints

- cultural, learned conventions

- logical constraints

Desktop computers have physical characteristics that cannot be altered by the designer: they have a screen with a certain size and resolution, a keyboard, a mouse with buttons, and so forth.

Learned, cultural conventions are acquired by a social group and are unlearned only slowly. The computer scrollbar, an element that did not exist before, is an example of a learned convention. Another cultural convention is the reading and writing direction of different languages, which defines our sense of where more and less important elements are positioned. Designers can ignore those conventions, but only at the expense of usability.

Humans are able to deduce actions from clues in their surrounding environment. This enables them to know when a task is finished, or to discover hidden elements by scrolling down a page. Norman states that designers should take this into account by designing a consistent conceptual model and by visualising the clues that support logical reasoning.

Norman’s best-known and most misunderstood concept is that of affordance, a term originally coined by the American psychologist J. J. Gibson.

Wikipedia

The depicted tea cups have a clear affordance for taking and holding them, indicated by their shape and their handles. This “real” affordance is a property of the physical world and cannot be transposed to a computer screen. In relation to computer software, Norman speaks of “perceived” affordance and refers to properties that can be perceived and interpreted by users.

Designers cannot manipulate physical constraints and cultural conventions, but they can take them into account in their designs. If they choose to ignore these limitations, users will likely have trouble interpreting the designs correctly.

The Problem with Metaphors

Many tasks can be modelled far more efficiently with software concepts than would be possible in the real world, even though these concepts have no real-world equivalent.

An example: entering text on touch screens via Swype can be up to 50% faster than on a standard on-screen keyboard. Why not make use of the efficiency the computer offers? Because Swype has no real-world counterpart, alternatives to a skeuomorphic visualisation must be found.

Sometimes metaphors work well even though they have no exact real-world equivalent, e.g., e-mails and attachments. Neither concept existed in the real world in this form (multiple recipients, CC), but both could be modelled successfully using the post office and letter metaphor.

Also read Lis Pardi’s enlightening article on how well icons work on interfaces, “In Defense of Floppy Disks”, complete with a PDF overview of the survey results.

The desktop metaphor of modern operating systems is more problematic. It is not very efficient in depicting files and folders — other software concepts like trees and lists have proven to be more suitable.

The Evolution of Interfaces as a Dialog between Users and Designers

If the original iOS was built for a 45-year-old newbie, iOS 7 looks like it was designed for a tween

gizmodo.com

This quote highlights an important aspect: while the original iOS was intended to introduce users to interaction concepts that were novel at the time, Apple now builds on the experience of the mobile generation and takes its learning curve into consideration.

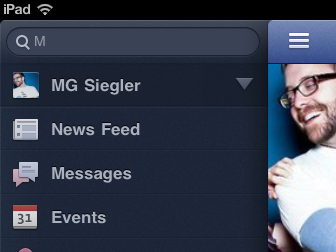

In the last five years, smartphones have become widely accepted, and with the design conventions introduced and popularised by iOS, a learning effect has taken place among its user base. Previously unknown elements have become quasi-standards, e.g., think of the following UI pattern:

hack2learn

If I remember correctly, this interaction pattern first surfaced in iPad apps (Tweetie, Facebook) and has become a quasi-standard since. Users of touch screen devices know that a tap on the menu button slides the main content away to reveal a sidebar drawer. And a term for it was coined quickly:

@viticci With a hamburger button!

— Marco Arment (@marcoarment) November 29, 2012

Design is in a constant, mutual dialog with its users: users pick up successful designs and discard less successful ones. Designers evolve successful designs further or create new patterns that allow new ways of usage. This steady exchange enables the evolution of user interfaces and new interaction models, e.g., touch and voice control, with their latest manifestations in Google Glass and smart watches.

Outlook

This evolution of the users is one of the reasons why Google, Facebook, Microsoft, and Apple are abandoning the skeuomorphic paradigm: the advent of mobile usage, combined with the widespread availability of touch interaction, has changed not only the look of user interfaces but also how and when humans interact with computers.

Another trend emerging with the recent updates of desktop operating systems is the diffusion of mobile concepts to the desktop. Like Windows, OS X is under the influence of its mobile counterpart, iOS, even if Apple has not (yet) taken a step as radical as Microsoft did with Windows 8.

This development will certainly continue and intensify, while the question “skeuomorphic or flat” will fade into the background, because other aspects are more important when designing user interfaces and interactions. This eventually leads us back to the pillars of good design:

- clear labels are more important than three-dimensional buttons with gradients

- screen elements and fonts must be clearly legible and recognisable

- interaction must be based on an easily understandable conceptual model

- avoid superfluous elements and keep the added benefit for the user in mind, and

- put content at the center

The use of skeuomorphism should be weighed against these basic rules; it is not a dogma with a simple binary answer.

By the way: Apple’s new iOS7 is not flat. It makes use of a number of skeuomorphisms and metaphors, e.g., textures, icons, and the impression of depth… read more about it in Matt Gemmell’s review of iOS7 and in the Interface Guidelines for iOS7.
